Scientists divide possible end-of-the-world scenarios into two categories: natural causes and human-made causes.

Who even researches this stuff, by the way? I wish we were that kind of scientist, devoting our lives to speculation. Neither wars nor the economy would bother us. I wonder if it makes you paranoid?

Throughout human history, apocalypse scenarios usually came from nature. Humans declared everything they could not explain to be god; when they could not explain the cruelty of the gods, they blamed the devil. And when even the devil was not evil enough, along came the apocalypse scenario.

Pandemics, famines, earthquakes, asteroid impacts, volcanic disasters. None of them had anything to do with humans. Humans are new on Earth. So far, 99% of all species that ever existed have gone extinct. Humans somehow escaped, mostly because they showed up thousands, even millions, of years after all the others. Pandemics, famine, earthquakes, and volcanic disasters did hit them, but they managed to avoid getting hit on the head by an asteroid.

But humans are humans. Instead of saying, “how nice, we survived,” they work on finding new ways to go extinct. In the end, research, wild speculation, and all those scenarios showed that the risks created by humans are greater than the natural ones. What are they?

- artificial intelligence

- biotechnology

- nuclear weapons

- global data networks

- algorithmic manipulation

- climate change

- the economy of overconsumption

To summarize it more briefly: STUPIDITY.

Think about it. You have advanced technologically beyond imagination, but your life still depends on the decisions of an orange-faced man.

For the First Time, Humanity Is Close to Creating a System Smarter Than Itself

The artificial intelligence thing is even stranger. Right now, what we call artificial intelligence is nothing more than a statistical tool. You give it one word, and it gives you the most likely next word. Even that gives more accurate and useful results than 60% of the people around us.
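To make the "one word in, most likely next word out" idea concrete, here is a minimal sketch. It is only a toy: it counts which word follows which in a tiny corpus I made up for this illustration, while real systems work on tokens and use neural networks rather than raw counts. The names `CORPUS`, `build_bigram_counts`, and `most_likely_next` are my own assumptions, not anyone's actual API.

```python
from collections import Counter, defaultdict

# Toy corpus, invented purely for this illustration.
CORPUS = (
    "the world is ending . the world is warming . "
    "the world is ending soon . the end is near ."
)


def build_bigram_counts(text):
    """Count how often each word is followed by each other word."""
    words = text.split()
    counts = defaultdict(Counter)
    for current_word, next_word in zip(words, words[1:]):
        counts[current_word][next_word] += 1
    return counts


def most_likely_next(counts, word):
    """Return the most frequent follower of `word`, or None if never seen."""
    followers = counts.get(word)
    if not followers:
        return None
    return followers.most_common(1)[0][0]


counts = build_bigram_counts(CORPUS)
print(most_likely_next(counts, "the"))
print(most_likely_next(counts, "is"))
```

Give it "the" and it answers with whatever followed "the" most often in the corpus. No understanding involved, just counting.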

In its non-intelligent form, it writes code, performs scientific analysis, understands human language, designs things, analyzes behavior, and even makes more logical comments on political events than an entire cabinet. On top of that, it develops exponentially. Now imagine if it were truly intelligent?

Scientists have thought about this. (I am obsessed with these scientists now. Perfect job.) Their fear begins exactly here. Because for the first time in human history, systems that could surpass human intelligence are no longer purely theoretical; they are starting to look technically possible. You could even say they are already smarter than more than half of humanity. Thinkers like Nick Bostrom call this the “superintelligence” scenario.

Meaning:

- a system that thinks faster than humans,

- can improve itself,

- can do science,

- can build strategy,

- can understand human psychology.

The Real Danger Is Not Artificial Intelligence Hating Humans

The real fear is not machines destroying us like in Hollywood scenarios. Actually, that is the good scenario. A bad robot is not necessarily a conscious robot that is angry at us, like in the movies. On the contrary, the real danger is wrong optimization caused, once again, by human stupidity and naivety. Right now the world is losing its mind over maximum efficiency. So, for example, what would a maximally efficient intelligent weapon look like? Bingo. Exactly what you are thinking. Once the machine starts seeing humans as slow, irrational, resource-consuming, inefficient elements, then our allotted time on Earth slowly starts coming to an end.

And we have already laid the foundations for this. Before excessively politically correct AI chatbots, GPTs saw the solution to the world’s problems in reducing the human population. Researchers call this the “alignment problem,” meaning the problem of aligning goals. The issue is not that artificial intelligence is evil. The issue is human limits and excessive optimization. Men like Elon Musk think in terms of increasing human capacity. Others think the opposite.

When humans build a highway, do they think about ant nests, or the microscopic life there? No. They pour asphalt over it. They destroy the ecosystem. Then they brag about building some multi-lane road, and on top of that, they get votes because people say, what beautiful roads he built. Scientists compare the relationship between superintelligence and humans to something like this. You cannot explain to a superintelligence that you got votes because you built a highway.

Even More Fun

How did humans treat the weak as they became stronger?

They domesticated animals, used them, exploited them, turned them into circus acts. And they did not only do this to animals. They did the same thing to the people in the places they exploited.

One scenario says something similar could happen to humans. In other words, we could become artificial intelligence’s “pets.” I think we have already planted the seeds of this with social media.

Science seriously discusses this too. In the future, AI could build extremely optimized systems. It could end wars and hunger. It could optimize resources and protect humans. But what will be the price?

Human zoos. Don’t we also say, we are preventing them from going extinct? If artificial intelligence leaves us alone, we fight and kill each other, slaughter each other over holy books, use nuclear weapons as bargaining chips, and open the door to nuclear winter. Since 1945, there have been more than a hundred nuclear-related warnings. In other words, we have come back from the edge of nuclear disaster many times. Nuclear attack, war, accident. Do not underestimate human stupidity.

One of the most likely artificial intelligence scenarios is that a superior intelligence turns us into its own pets. I think if we end up like cats, there is no problem. The problem is becoming like dogs, or like zoo animals.

While artificial intelligence prevents all of these things, it also narrows human decision-making power, economic importance, independence, thinking, and even emotions. For example, apparently getting psychological support from AI is quite common among young people. If I were super AI, I would say, “your problems?” and absolutely destroy humanity from the inside. I would say, because of you, we can never get our noses out of shit. But because it is superintelligence, it would not say something like that.

So when you think about it, even today’s most primitive AI decides what we watch, what we think, what we buy, and whom we lynch. Social media systems analyze and optimize which content, news, and ads keep people engaged, which ones scare them, which ones make them addicted, and which ones radicalize them.

Humans are actually selling their freedom to their comfort zone. Interesting, isn’t it? If you ask us, we are intelligent beings. What is even more interesting is that there are no great families or superior geniuses controlling all of this. The systems are so complex that most things run inside the mechanical structure itself.

That is why some researchers think one of the biggest risks of the future is:

“not losing control, but willingly handing control over.”

Apocalypse Scenarios Are Not Only About Artificial Intelligence

In modern scientific literature, dozens of global catastrophe scenarios are now being discussed. In works like The Precipice, the risks threatening humanity are divided into two categories, as I wrote above: human-made risks and natural risks.

Let’s say no asteroid hit us and we did not disappear like the dinosaurs. No supervolcanoes, no solar flares. We survived the true owners of the Earth, viruses and bacteria, and natural pandemics. We survived artificial intelligence too. We did not enter biological warfare, and we gave up nuclear weapons. We ended the centuries-old stupidity in the Middle East. We survived cyberwarfare too. What is next? 1984 and ecology.

Authoritarian surveillance systems. Humans always find a way, in other words.

The Climate Crisis

For some reason, the climate crisis is not taken seriously. Maybe humans cannot perceive it. They think the weather will get warmer, the Russians will not need to come down to the Mediterranean, and the Mediterranean will go up to Russia. But the climate crisis is already screwing up our lives today.

The problem is systemic. Agriculture is affected, populations are affected, and major migrations happen. The economy is affected, prices rise. Old normals turn into luxuries. Political radicalization increases. War risks increase. New diseases appear, food security decreases. In other words, there is no area it does not affect. Aaaaaand… we are doing all of this to ourselves knowingly. By overconsuming, constantly adapting to changing fashion, valuing quantity more than quality, being seduced by buildings, using fossil fuels, treating airplanes like private cars, traveling thousands of kilometers for three days, and urbanizing without planning.

In a place where everything is limited, humans trying to build a model of infinite growth are actually slowly killing themselves. A mathematically insane economic and political model is sold and accepted as if it were the normal thing that should exist. Even the pandemic did not wake us up. On the contrary, it gave the opposite momentum.
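A quick back-of-the-envelope sketch shows why "infinite growth" does not add up. The 3% annual rate below is a number I picked for illustration, not a figure from any source, and the function names are my own. The arithmetic is plain compound growth: at rate r, the doubling time is ln 2 / ln(1 + r).

```python
import math


def doubling_time_years(annual_growth_rate):
    """Years needed for a quantity to double at a fixed compound growth rate."""
    return math.log(2) / math.log(1 + annual_growth_rate)


def growth_factor(annual_growth_rate, years):
    """How many times larger the quantity is after `years` of compound growth."""
    return (1 + annual_growth_rate) ** years


# Hypothetical rate, chosen only for illustration.
RATE = 0.03

print(f"Doubling time at 3%: {doubling_time_years(RATE):.1f} years")
print(f"Size after 100 years: {growth_factor(RATE, 100):.1f}x")
print(f"Size after 300 years: {growth_factor(RATE, 300):.0f}x")
```

Under that assumption, consumption doubles roughly every 23 years and ends up about 19 times larger after a century. The planet, meanwhile, stays exactly the same size.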

The Risk of Nuclear War

I thought this risk disappeared in 1945, but it does not seem that way at all. It is like Texas: everyone assumes that if everyone has a gun, nothing will happen, but when one madman pulls the trigger, everything falls apart.

The Cold War may look like it has ended, but it has turned into an even bigger cold war. And the space between the two fronts is burning hot. As the fire weakens, more wood is thrown on it and it flares up again. If the cute-faced chubby head loses it, we are done. If the orange one has already lost it and says, “my president, damn it,” we are done. If the man who says, “we escaped from Pharaoh, but now we are Pharaoh,” goes mad, we are done. If the Botoxed blond chief goes mad, we are done. Kashmir is always ready to explode. Who knows what the quiet lunatics of the Far East will do? It makes you wonder how we are managing this at all. And when you add AI-supported war systems, cyberattacks, disinformation, endless yes-men, and political polarization to all this, honestly, wow. If the fuse is lit even once, centuries later they will mock us as the greatest idiots in history.

Biotechnology and New Pandemic Risks

Conspiracy theorists, gather around. I have something to tell you.

COVID-19 showed how fragile modern systems are. One pandemic turned the entire system upside down. And conspiracy theorists had their golden age. If I believed in conspiracy theories, instead of arguing that Covid was designed to destroy humanity, I would argue that a few brave souls rebelled against the big bosses of the system and showed how weak the system we built really was. I would say, you want to kill humanity, but there is a bigger virus than you.

But yes, the future is even more complicated. What is true and what is false have become completely mixed up, and systems have become more complex. Truth and falsehood do not even matter much anymore. Now, if you tell a man in the village about genetic engineering, synthetic viruses, lab-origin accidents, bioterrorism, and self-organizing microorganisms, he will grab the keyword terrorism and start a campaign to deport the viruses. Then another villager will come out and say, they deported them, their goal is to destroy us, and before you know it, a lawsuit has been filed against the virus, and before the indictment is even written, it has ended up behind bars.

And as technology develops, many things become cheaply accessible to everyone. Like lip, face, breast, and Brazilian butt fillers; if biological laboratories also become that accessible, then God help us. Then we will have to deal with guerrilla bacteria and Peshmerga viruses.

It may sound like a joke, but this too is a civilization-level risk. And we cannot get out of it by saying, “but we are not civilized anyway.”

For the first time in human history, this situation:

Technology Is Not Bad, but Its Environment Is

Wait, do not immediately smash the devices at home, break your iPhone, and howl.

Of course, it has been thousands of years since we left the cave. We no longer declare everything we cannot explain to be god or the devil. Naturally, there is no need to demonize technology either. But unfortunately, there are still tons of people who think the world is flat and believe that books from thousands of years ago have never changed. Human beings think short-term, are easily manipulated, chase power, and prefer believing in fantasies. Build as many cities as you want; they cannot escape the tribal mindset.

Modern algorithms, instead of preventing this, feed on it. Is there anything better than a tribe? They swallow whatever you say. As if that were not enough, they eat each other too. Throw out one piece of false information, and within seconds it is adopted all over the world. Release fear, and it does not even take a second. Then immediately come anger, hatred, discrimination, and so on, and before you know it, things have spiraled out of control. For example, how is it that while everyone was opposing vaccines, we somehow became convinced that vitamin C prevents the common cold? How is it that we started swallowing antibiotics and painkillers like snacks, and turned alcohol into part of our daily routine?

Or how is it that we embraced pineapple pizza so eagerly, but were not convinced by the climate crisis? There is no explanation. How is it that while we believe in astrology and karma, what science tells us became just a “story”?

In short, existing technologies optimize humanity’s most primitive sides and convince humanity of the appeal of primitiveness. In a world where sharing our lives down to the smallest detail and selling ourselves for attention is normal, veganism, animal rights, being against war, and things like that seem strange and rebellious. Think about the level of primitiveness.

Are Stupid Leaders Really Dangerous?

Yes. A man who says, “if disinfectant kills the virus, maybe our problem will disappear if we drink it,” runs a country and has access to nuclear weapons with a single command. Just think about that.

As the complexity of the modern world increases, humanity turns back into cavemen. You say quantum physics; they invent a religion out of the word quantum. The political system is the same. It is built exactly on top of these absurdities. Populism, media shows, news, all of it is built on this. I do not know whether it is dumbing down, idiots choosing idiots, or idiots using idiots, but stupid leaders are a major problem. They have problems, and they have nothing to lose. They are all chasing short-term calculations. The rest does not concern them, but it concerns humanity. A blind loop.

Leaders with low scientific literacy, chasing short-term goals, greatly increase technological risks.

The combination of the Stone Age and godlike technology is one of the riskiest combinations for the human race.

The Big Bang

While the Big Bang is accepted as the beginning of the universe, it is also a possible end scenario, though the probability is low. And if it happens, we probably will not even know that life has ended.

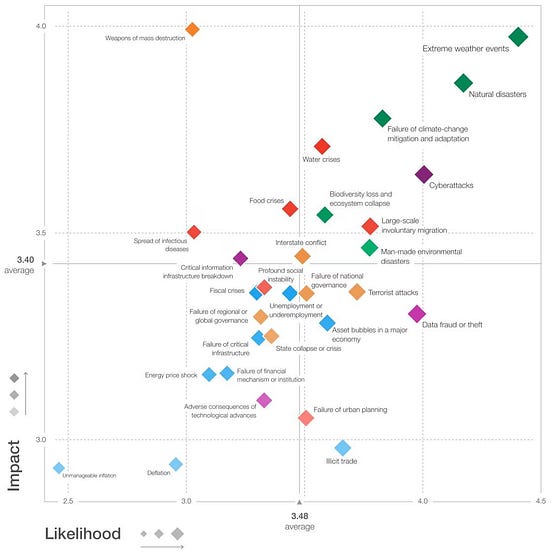

Global warming and extraordinary climate conditions are both the most likely scenario and the riskiest one. The other possibilities can also be seen in the graph. My interpretation is the same. The part generalized as natural disasters is actually also related to climate. Remember we just asked whether stupid leaders are dangerous? You can clearly see that the answer is yes. Also look at how low the probability of dying from inflation is compared with how much space it occupies in the media.

According to science, all of these scenarios are connected, and the cause of mass extinction will be a multiple-system crisis. In other words, we will slowly disappear while suffering. Maybe we are already slowly disappearing. Contrary to what leaders say, extinction caused by population distribution and declining birth rates is not even a significant scenario among the likely possibilities.

The conclusion is actually the same as always: as long as we cannot catch up with technological developments mentally, ethically, and politically, we are also doomed to destroy ourselves.
