No healthy person wants to kill. At their core, people are not inclined to kill or to engage in violent conflict.

When you look at diaries and notes from the world’s bloodiest wars, you see the same thing. No matter how motivated the soldiers were, in the end they say that war is not a good thing. War doesn’t end when the last drop of blood is spilled; it ends when both sides agree that fighting is meaningless. It starts at the table, and it ends at the table.

The dilemma faced by the controversial field of war psychology is exactly this: persuading soldiers—who don’t want to fight and who run from war—to fight and kill. Another “table” is what makes the dynamics of war work.

I see engineers working on war technologies caught in a similar dead end. If you talk to them individually, they say they are against war but would give everything to defend their country. Over time, though, the pleasure of engineering starts to outweigh conscience.

Compared to a soldier, they are much farther from war, and therefore also far from the moral reckoning a soldier goes through, because for them data comes before everything else. We will discuss this in a later article.

The ethical question here is this: why do we have to do this? Why is building machines of death so important?

First, let’s take a look at the technology. Let’s consider extreme scenarios, review possible ethical problems, weigh benefits and harms, and finally close the article by asking whether there is an ethical wall—and what work has been done on this topic.

Many technologies could be listed here. The first that come to my mind are the ones Turkey proudly talks about, even though they have existed and been in use for 20 to 30 years: unmanned aerial vehicles, together with the AI integration we have witnessed more actively over the last two years.

In recent years we’ve seen different forms and uses of them. We added the term “kamikaze drone” to our vocabulary.

Lethal Autonomous Weapon Systems (LAWS) are military systems that can independently select and neutralize targets without human intervention. To make decisions, they use artificial intelligence and machine learning algorithms. Beyond drone integrations, they can also be deployed in ground vehicles and fixed defense systems.

Autonomous surveillance and targeting systems are similar. The only difference is that they are used more for surveillance and data collection. In this way, they can observe, recognize targets, determine a target without human intervention, and engage the target without a second approval.

There are many similar technologies. Some provide fire support, some perform target discrimination, and some decide on their own and take action.

The features most often cited for autonomous weapons are that they do not need human intervention, they can be used in harsh conditions without risking the operator’s life, they make fast decisions with low error rates, and they perform real-time data analysis.

We said fast and low-error, but according to whom? According to however “error” has been defined, and according to the data the system was trained on. And that creates a major risk: a wrong decision!

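Imagine an autonomous weapon misreading the data and striking a civilian target. Something close to this has already been reported. According to a UN Panel of Experts report on Libya published in 2021, Turkish-made Kargu-2 drones were used in 2020 to hunt down retreating fighters, and the report described them as able to attack targets without a data link to an operator. Whether they actually acted without human control is still disputed. You probably didn’t hear about it in our newspapers, because the Turkish Armed Forces only hit the right target, right?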

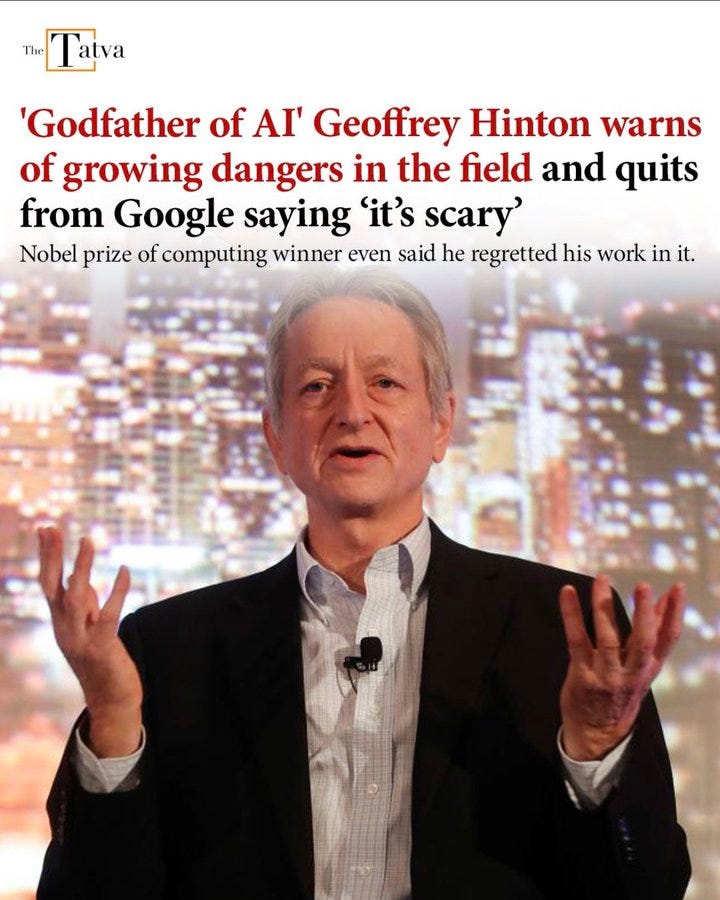

This week, two scientists received the Nobel Prize in Physics because of their work related to artificial intelligence. Both pointed to another risk in their warnings: “the loss of human control.”

When weapon technologies are involved, this risk grows even larger, because these robots operate beyond the chain of command. This also brings the risks of mass surveillance, mass killing, and absolute power through coercion.

Moreover, this arms and technology race is accelerating further and laying the groundwork for an increase in violence. Think about Israel’s pager attack—an engineering and supply-chain turning point.

One of the biggest risks is that non-state actors are also entering this race. Think about SpaceX satellites launched with the promise of providing internet to the entire world—everyone using the same internet under the same conditions. Now every war headline comes with an Elon Musk photo. Will he share information or not? Think about the power he holds. And then imagine other private companies and terrorist organizations growing stronger in this technology race. Autonomous attacks increasing and becoming cheaper… The global balance and stability could flip overnight.

Why is so much investment being made in weapons technologies?

Of course, the first reason is security and not falling behind rivals. The other reason is to increase military effectiveness by building more precise systems. This means fewer people need to go to the front, massive data can be analyzed in a short time, and stress on personnel decreases. In this way, human error is minimized, civilian losses decrease, and long-term war costs also decrease. (At the same time, these are also direct advantages for conducting more wars.)

Another reason is the arms race among major global actors. As China, the US, and Russia advance in this area, other countries want to close the gap and have a say in military affairs—at least to have leverage against these powers. In this sense, the technology race is quite “democratic” and also breaks the perception of a unipolar world.

One benefit of this approach is that the knowledge produced with so much investment and labor accelerates development in other fields as well. But having benefits does not make it reasonable to turn this into a huge race. There are many ethical questions.

Ethical Concerns

The most fundamental ethical concern is responsibility. If these weapons kill civilians or commit war crimes, who is responsible? The engineer who wrote the algorithm? The soldier who used it? Or the people who defined the requirements? Maybe the AI itself…

This uncertainty is a major dead end both legally and ethically. Current laws are extremely inadequate and broken when it comes to solving this problem.

Bias and discrimination are another risk. I previously mentioned technological discrimination. One example is a soap dispenser. While the error rate was 1% for white users, the infrared sensor reportedly made errors around 60% for Black users.

AI systems are only as good as the data they are trained on. Many studies show that facial recognition systems produce different results depending on gender and ethnicity. This gender and racial bias opens the door to disproportionate use of force against minorities. In conflict situations, serious human rights violations are highly likely.
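To see why a single “low error rate” can hide this kind of bias, here is a small sketch in Python. The counts are hypothetical and chosen only for illustration; they borrow the 1% and 60% figures reported for the soap dispenser above and are not measurements from any real system. The class and function names are also my own.

```python
from dataclasses import dataclass


@dataclass
class GroupResult:
    """Hypothetical evaluation counts for one demographic group."""
    name: str
    samples: int
    errors: int

    @property
    def error_rate(self) -> float:
        return self.errors / self.samples


def overall_error_rate(groups: list[GroupResult]) -> float:
    """Error rate over all samples, ignoring which group they belong to."""
    total_errors = sum(g.errors for g in groups)
    total_samples = sum(g.samples for g in groups)
    return total_errors / total_samples


def disparity_ratio(groups: list[GroupResult]) -> float:
    """Worst per-group error rate divided by the best one."""
    rates = [g.error_rate for g in groups]
    lowest = min(rates)
    return float("inf") if lowest == 0 else max(rates) / lowest


# Illustrative numbers only: a well-represented group with a 1% error rate
# and an under-represented group with a 60% error rate.
groups = [
    GroupResult("well represented", samples=9500, errors=95),
    GroupResult("under represented", samples=500, errors=300),
]

print(f"overall error rate: {overall_error_rate(groups):.2%}")
for g in groups:
    print(f"{g.name}: {g.error_rate:.2%}")
print(f"disparity ratio: {disparity_ratio(groups):.1f}x")
```

With these made-up counts the overall error rate comes out just under 4%, which sounds acceptable on paper, while one group is misjudged about sixty times more often than the other. This is why “low error” always needs the follow-up question: low for whom?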

Beyond all of this, there is an even bigger and broader philosophical question: how will a machine make a life-and-death decision—and why would it?

War is fundamentally a human activity. Leaving life-and-death decisions to machines is a major problem for traditional war ethics as well. Removing human conscience and empathy from battlefields naturally brings greater violence and devastation. Ignoring and devaluing human dignity and conscience to this extent is also a huge question mark.

Let’s also assess the seriousness of the issue from this angle: one way or another, humans think about the consequences of what they do—and they live with those consequences. Psychologically, socially, and legally, they bear that burden.

Machines, however, fight without any of this inner accounting or concern for future consequences. For this reason, the UN Secretary-General has called for a ban on LAWS, and the Stop Killer Robots coalition campaigns for an international prohibition. Similar efforts are carried out by groups such as Human Rights Watch, the International Committee of the Red Cross, the Future of Life Institute, the International Panel on the Regulation of Autonomous Weapons, and Article 36. From time to time you may have read about hundreds of scientists signing collective petitions, or sometimes taking to the streets.

As far as I have read, there is currently no binding international mechanism on this issue. Some countries are more prominent in calling for these technologies to be banned or strictly controlled. Other countries argue that military competition is necessary. This shows that even larger risks still persist.

As technology develops, lawyers, ethical thinkers, military leaders, and politicians need to work more comprehensively on this issue. There must be clear and strong regulations. The future of wars—and perhaps the future of humanity—depends on this. So when you’re praising “domestic and national” achievements, think twice.

Footnote: I think international organizations such as the United Nations, Human Rights Watch, the World Health Organization, and the Climate Action Network should have—and strengthen—their power to impose enforcement over states and the private sector. These kinds of organizations are more important than ever in today’s technology world. Otherwise, scientific warnings don’t really mean anything. They remain only as warnings.
